The concept of text mining is similar to that of data mining, except that text mining focuses only on text that can be interpreted as natural language within a specific structural format, such as documents, materials and information resources that contain unstructured text data. Other names for this practice include text data mining and text analytics.

Research suggests that 80% of business data consists of unstructured text data. The important element of text mining is producing knowledge from distributed and isolated sources of data across structured, unstructured and semi-structured formats. Transforming text-based big data into meaningful information and, eventually, actionable knowledge can involve several text mining procedures.

Most traditional data platforms built on data warehouse systems require information to be preprocessed so that it adopts an established schema structure. Modern data platforms such as data lake and data lakehouse technologies also apply a schema structure, but they do so at the analysis stage, based on tooling specifications (schema-on-read).

With that context, we can confidently say that an automated and intelligent mechanism for transforming natural text data into a standardized format has plenty of applications, no matter your business function or your industry. One example is analyzing language nuances to understand customer sentiment on product performance, functionality and features.
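To make the contrast between preprocessing into an established schema and schema-on-read more concrete, here is a minimal Python sketch. The sample records, the field names (`user`, `rating`, `comment`, `device`) and both helper functions are hypothetical and chosen only for illustration; real warehouse and lakehouse tooling handles this very differently in practice.

```python
import json

# Hypothetical raw records, as they might arrive from a feedback form.
RAW_EVENTS = [
    '{"user": "ana", "rating": "4", "comment": "works well"}',
    '{"user": "ben", "comment": "too slow", "device": "tablet"}',
]

# Illustrative fixed schema for the "preprocess first" approach.
REQUIRED = {"user": str, "rating": int, "comment": str}


def write_with_schema(raw_lines):
    """Coerce each record to the fixed schema before storing it.

    Records that lack a required field, or whose values cannot be
    converted, are rejected instead of stored.
    """
    stored, rejected = [], []
    for line in raw_lines:
        record = json.loads(line)
        try:
            stored.append({k: cast(record[k]) for k, cast in REQUIRED.items()})
        except (KeyError, ValueError):
            rejected.append(line)
    return stored, rejected


def read_with_schema(raw_lines, fields):
    """Keep the raw lines untouched and apply a structure only at query time."""
    for line in raw_lines:
        record = json.loads(line)
        yield {field: record.get(field) for field in fields}


stored, rejected = write_with_schema(RAW_EVENTS)
print(len(stored), "stored,", len(rejected), "rejected")

for row in read_with_schema(RAW_EVENTS, ["user", "device"]):
    print(row)
```

In this sketch the second record is rejected up front because it has no `rating`, while the schema-on-read path keeps it and simply returns `None` for any field the analysis asks for but the record lacks.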

Text mining typically relies on a combination of machine learning, statistics and linguistics. A subset of data mining, it is particularly focused on documents, materials and information resources that contain unstructured text data. The goal of text mining is to discover meaningful insights and patterns, as well as unknown information based on contextual knowledge. This mined information can then be used in a variety of ways.

So, in this article, let's take a look at how text mining works, use cases for it, and how it can uncover meanings and patterns that traditional approaches cannot.
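As a rough illustration of what turning unstructured text into structured information can look like, the sketch below converts free-text reviews into records of term counts. The sample reviews, the tiny stop-word list and the function names are assumptions made for this example; production text mining would normally use a dedicated NLP library and far richer linguistic processing.

```python
import re
from collections import Counter

# Hypothetical stop-word list for illustration; real lists are much longer.
STOP_WORDS = {"the", "a", "an", "is", "and", "but", "it", "of", "to"}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def to_structured(doc_id, text):
    """Turn one free-text document into a structured record of term counts."""
    tokens = [t for t in tokenize(text) if t not in STOP_WORDS]
    return {
        "doc_id": doc_id,
        "n_tokens": len(tokens),
        "term_counts": Counter(tokens),
    }


# Made-up sample data.
reviews = {
    1: "The battery life is great, but the screen is dim.",
    2: "Great screen and great battery.",
}

records = [to_structured(doc_id, text) for doc_id, text in reviews.items()]
for record in records:
    print(record["doc_id"], record["n_tokens"], record["term_counts"].most_common(2))
```

Each record now has the same fields regardless of how the original sentence was phrased, which is what makes counting, filtering and comparison across documents possible.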

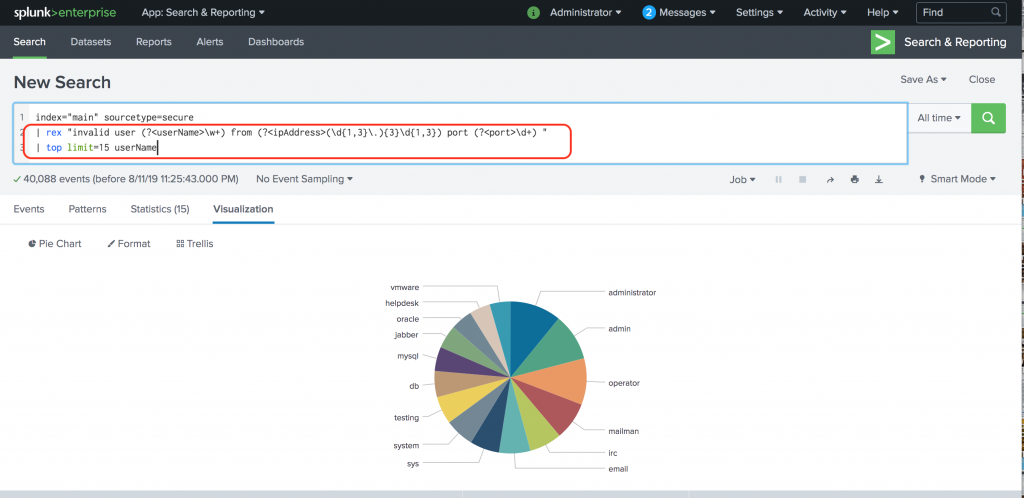

Text mining is the practice of extracting and transforming unstructured text data into structured text information.

    | tstats `security_content_summariesonly` count values(Processes.dest) as dest values(Processes.user) as user min(_time) as firstTime max(_time) as lastTime from datamodel=Endpoint.Processes by Processes.process_name
    | rename Processes.process_name as process

detect_rare_executables_filter is an empty macro by default. It allows the user to filter out any results (false positives) without editing the SPL.

To successfully implement this search, you must be ingesting data that records process activity from your hosts and populating the Endpoint data model with the resultant dataset. The macro filter_rare_process_allow_list searches two lookup files for allowed processes: rare_process_allow_list_default.csv and rare_process_allow_list_local.csv. To add your own processes to the allow list, add them to rare_process_allow_list_local.csv. If you wish to remove an entry from the default lookup file, you will have to modify the macro itself to set the allow_list value for that process to false. You can modify the limit parameter and search scheduling to better suit your environment.

Some legitimate processes may be only rarely executed in your environment. As these are identified, update rare_process_allow_list_local.csv to filter them out of your search results.

The Risk Score is calculated by the following formula: Risk Score = (Impact * Confidence/100). Initial Confidence and Impact are set by the analytic author.

Replay any dataset to Splunk Enterprise by using our replay.py tool or the UI.
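The risk score formula and the allow-list filtering described above can be sketched in plain Python. This is an illustration of the logic only, not the Splunk implementation: the `process` column name, the sample events, the way the two lookup files are combined, and the use of a `limit` of rarest names are all assumptions made for the example.

```python
from collections import Counter


def risk_score(impact, confidence):
    """Risk Score = (Impact * Confidence / 100), as given in the text."""
    return impact * confidence / 100


def load_allow_list(*row_groups):
    """Collect process names marked allow_list=true in any group of rows.

    Each group stands in for one lookup file (default or local). The
    'process' column name is an assumption made for this sketch.
    """
    allowed = set()
    for rows in row_groups:
        for row in rows:
            if row["allow_list"].strip().lower() == "true":
                allowed.add(row["process"].strip().lower())
    return allowed


def rare_processes(process_events, allowed, limit=30):
    """Return the `limit` least frequent process names, minus allowed ones."""
    counts = Counter(name.lower() for name in process_events)
    # Sort by ascending count, then by name so the order is deterministic.
    rarest = sorted(counts.items(), key=lambda kv: (kv[1], kv[0]))[:limit]
    return [(name, count) for name, count in rarest if name not in allowed]


# Made-up process events and lookup rows.
events = (
    ["chrome.exe"] * 50
    + ["svchost.exe"] * 40
    + ["backup_tool.exe"] * 2
    + ["odd.exe"]
)
default_rows = [{"process": "backup_tool.exe", "allow_list": "true"}]
local_rows = []

allowed = load_allow_list(default_rows, local_rows)
print(rare_processes(events, allowed, limit=2))
print(risk_score(impact=50, confidence=60))
```

With these made-up inputs, `backup_tool.exe` is among the two rarest processes but is dropped because it is on the allow list, so only `odd.exe` is reported. An Impact of 50 with a Confidence of 60 gives a risk score of 30.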
